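After studying Go for a while I wanted to write a small project, so I looked up some material online and built a few small demos. Today I am sharing the small project I wrote recently: a single-task crawler and a concurrent crawler implemented in Go. Go has very good support for concurrency, so once you are comfortable with goroutines and channels, the code is easy to follow.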

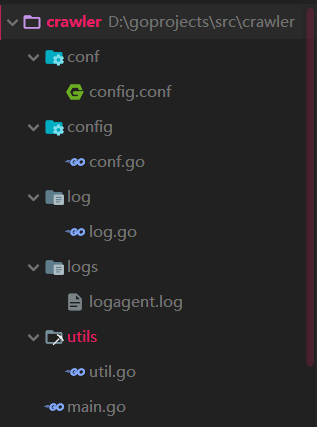

Project structure:

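regexp API:

- `re := regexp.MustCompile(reStr)`: `reStr` is the regular expression; the call compiles it into a matcher and panics if the expression is invalid.
- `re.FindAllStringSubmatch(srcStr, -1)`: `-1` returns every match; a positive number such as 1, 2 or 3 returns at most that many matches.

Some commonly used regex symbols:

- `()`: marks a capture group
- `\w`: letter, digit or underscore
- `\d`: digit
- `\D`: non-digit
- `[\s\S]`: any character, including newlines
- `\s`: whitespace (lowercase s)
- `\S`: non-whitespace (uppercase S)
- `re1|re2`: the fragment matched by re1 or by re2
- `regexp+` / `regexp*`: one or more / zero or more repetitions; appending `?` (`+?`, `*?`) makes the quantifier non-greedy, so it stops at the first place where the text after it can match
- `{n,m}` / `{n,}`: n to m repetitions / at least n repetitions
- `[\w\.]`: any letter, digit, underscore or `.`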
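As a minimal sketch of the two calls above, the following program compiles a pattern and compares `-1` with a positive limit. The pattern, the sample text and the helper name `findPairs` are made up for illustration and are not part of the project.

```go
package main

import (
	"fmt"
	"regexp"
)

// findPairs is a hypothetical helper: it returns at most n matches of
// `(\w+)=(\d+)` in src, or every match when n is -1.
func findPairs(src string, n int) [][]string {
	re := regexp.MustCompile(`(\w+)=(\d+)`)
	return re.FindAllStringSubmatch(src, n)
}

func main() {
	src := "a=1 b=22 c=333"

	all := findPairs(src, -1)
	fmt.Println(len(all)) // 3
	// Each match is a slice: the whole match first, then one entry per group.
	fmt.Println(all[1]) // [b=22 b 22]

	// A positive n caps the number of matches.
	fmt.Println(len(findPairs(src, 2))) // 2
}
```

Each element of the result is one match, which is why the result is a slice of slices.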

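Example:

In the pattern `[\s\S]+?href`, `[\s\S]` matches any character, `+` means one or more of them, and `?` makes the match non-greedy, so it stops at the first `href` instead of running past it.

Regex example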

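Here a phone number serves as the example: rePhone = `(1[3456789]\d)(\d{4})(\d{4})`. The result is a two-dimensional slice in which each match has four entries: the whole match comes first, and every pair of parentheses adds one more.

Shortcuts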

Alt + Shift + M: extract the selection into a function

Ctrl + Alt + L: format the code

Ctrl + Alt + V: auto-complete the variable name

- Sample code: fetching the HTML content of a page with the crawler

`func GetHtml(url string) string {` (the full function appears in main.go below)

- Project structure

- Source code

The complete code can be forked from my GitHub:

main.go

    package main

    import (
    	"context"
    	"crawler/config"
    	"crawler/log"
    	"crawler/utils"
    	"fmt"
    	"github.com/astaxie/beego/logs"
    	"io/ioutil"
    	"net/http"
    	"regexp"
    	"strings"
    	"sync"
    	"time"
    )

    // Regular expressions used by the crawler
    var (
    	// Each match has four entries: the whole match first, plus one per pair of parentheses
    	rePhone = `(1[3456789]\d)(\d{4})(\d{4})`
    	//reEmail = `[1-9]\d{4,}@qq.com`
    	// \w: letter, digit or underscore
    	reEmail = `\w+@\w+\.[a-z]{2,3}`
    	// Hyperlinks
    	reLink = `<a[\s\S]+?href="(http[\s\S]+?)"`
    	// Images on the site
    	rePic = `<img[\s\S]+?src="(http[\s\S]+?)"`
    	// Titles
    	reTitle = `<a[\s\S]+?title="([\s\S]+?)"`
    	// Image names
    	reName = `<img[\s\S]+?alt="([\s\S]+?)"`
    	// Image URL together with the image's alt name
    	rePicAlt = `<img.+?src="(http.+?)".+?alt="(.+?)".*?>`
    	// The alt attribute on its own
    	reAlt = `alt="(.+?)"`
    )
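To see why the patterns above use `+?`, here is a small sketch that runs the `reLink` pattern next to a greedy variant of it. The greedy pattern, the sample HTML and the helper `firstGroups` are assumptions made for this illustration only.

```go
package main

import (
	"fmt"
	"regexp"
)

// firstGroups returns capture group 1 of every match of pattern in html.
func firstGroups(pattern, html string) []string {
	re := regexp.MustCompile(pattern)
	var out []string
	for _, m := range re.FindAllStringSubmatch(html, -1) {
		out = append(out, m[1])
	}
	return out
}

func main() {
	html := `<a href="http://a.example/">A</a> <a href="http://b.example/">B</a>`

	// Non-greedy: each quantifier stops as early as possible, one match per link.
	lazy := firstGroups(`<a[\s\S]+?href="(http[\s\S]+?)"`, html)
	fmt.Println(len(lazy), lazy) // 2 [http://a.example/ http://b.example/]

	// Greedy: the first quantifier swallows everything up to the last href.
	greedy := firstGroups(`<a[\s\S]+href="(http[\s\S]+)"`, html)
	fmt.Println(len(greedy), greedy) // 1 [http://b.example/]
}
```

With the greedy variant the first link is lost, because the whole input is consumed by a single match.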

    // Global variables
    var (
    	pushChan sync.WaitGroup // tracks the goroutine that pushes results into midChan
    	wg       sync.WaitGroup // tracks the download goroutines
    )

    func main() {
    	// Load the configuration used by the logger
    	fileName := "./conf/config.conf"
    	err := config.LoadConf("ini", fileName)
    	if err != nil {
    		logs.Error("LoadConf failed, err = ", err)
    		return
    	}
    	err = log.InitLogger(config.Conf.LogPath, config.Conf.LogLevel)
    	if err != nil {
    		fmt.Println("init logger failed, err = ", err)
    		panic("init logger failed")
    	}
    	url := "https://www.163.com/"
    	//url := GetHtml("https://tieba.baidu.com/p/6118291659?pid=126751186930&cid=0&red_tag=1970653863#126751186930")
    	//url := GetHtml("https://www.766ju.com/")
    	//url := "https://www.766ju.com/vod/html2/20124.html"
    	fmt.Println(time.Now(), ": image crawling started!")
    	/**----------------------download images-------------------*/
    	//elems := GetUrls(url,reName)
    	//elems := GetImgInfos(url, rePicAlt)
    	//for _, str := range elems {
    	//	fmt.Printf("Name = %s \n, url= %s \n", str["url"], str["fileName"])
    	//	// download the image asynchronously
    	//
    	//}
    	//wg.Wait()
    	// Producer: push every image into midChan and close the channel when done.
    	// The channel is closed inside the goroutine so that the loop below can start
    	// reading right away; waiting for the producer first would block forever
    	// once more images are found than the channel buffer can hold.
    	pushChan.Add(1)
    	go func(url, rePicAlt string) {
    		defer pushChan.Done()
    		defer close(midChan)
    		elem2Chan(url, rePicAlt)
    		fmt.Println(time.Now(), "image crawling finished!")
    	}(url, rePicAlt)
    	/**----------------------download images-------------------*/
    	/**----------------------read from the channel and download-------------------*/
    	fmt.Println(time.Now(), "image download started!")
    	for elem := range midChan {
    		wg.Add(1)
    		go func(elem map[string]string) {
    			DownLoadPicAsync(elem["url"], elem["fileName"])
    			wg.Done()
    		}(elem)
    	}
    	wg.Wait()
    	pushChan.Wait()
    	fmt.Println(time.Now(), "image download finished!")
    	/**----------------------read from the channel and download-------------------*/
    	/**----------------------print info-------------------*/
    	//PrintInfo(url,reName)
    }

    // Intermediate channel: every crawled image is pushed into it
    var (
    	midChan = make(chan map[string]string, 80)
    	picChan = make(chan int, 10) // semaphore: at most 10 at a time
    )

    // elem2Chan puts each image record into the channel midChan
    func elem2Chan(url, reg string) {
    	elem := GetImgInfos(url, reg)
    	for _, str := range elem {
    		midChan <- str
    	}
    }
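The hand-off through `midChan` is a producer/consumer pattern. Below is a minimal sketch of that pattern, assuming the producer closes the channel so that the consumer's `range` loop can end. The names `produce` and `consumeAll` are hypothetical, and plain strings stand in for the image records.

```go
package main

import (
	"fmt"
	"sync"
)

// produce sends every item into out and closes the channel afterwards,
// which is what lets a range loop on the receiving side terminate.
func produce(items []string, out chan<- string) {
	defer close(out)
	for _, it := range items {
		out <- it
	}
}

// consumeAll starts one goroutine per received item, waits for all of them,
// and returns the total length of the items as a stand-in for real work.
func consumeAll(in <-chan string) int {
	var (
		wg    sync.WaitGroup
		mu    sync.Mutex
		total int
	)
	for it := range in {
		wg.Add(1)
		go func(it string) {
			defer wg.Done()
			mu.Lock()
			total += len(it)
			mu.Unlock()
		}(it)
	}
	wg.Wait()
	return total
}

func main() {
	// The buffer is deliberately smaller than the input: the producer and the
	// consumer run at the same time, so a full buffer only causes a short wait.
	ch := make(chan string, 2)
	go produce([]string{"a", "b", "c", "d", "e"}, ch)
	fmt.Println(consumeAll(ch)) // 5
}
```

Because both sides run concurrently, the buffer size affects throughput but not correctness.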

    // DownLoadPic downloads an image synchronously
    func DownLoadPic(url, subFileName string) {
    	resp, err := http.Get(url)
    	if err != nil {
    		fmt.Println("download failed, err = ", err)
    		return
    	}
    	defer resp.Body.Close()
    	imageBytes, err := ioutil.ReadAll(resp.Body)
    	if err != nil {
    		fmt.Println("download failed, err = ", err)
    		return
    	}
    	fileName := ""
    	// Both branches currently save the file with a .jpg extension
    	if strings.Contains(url, "gif") {
    		//fileName := `D:\goImg\imgs\` + strconv.Itoa(int(time.Now().UnixNano())) + ".jpg"
    		//fileName := `D:\goImg\imgs\` + GetRandomName() + ".jpg"
    		fileName = `D:\goImg\imgs\` + utils.ReplaceName(subFileName) + ".jpg"
    		err := ioutil.WriteFile(fileName, imageBytes, 0644)
    		if err != nil {
    			fmt.Println("download failed, err = ", err)
    			return
    		}
    	} else {
    		//fileName := `D:\goImg\imgs\` + GetRandomName() + ".jpg"
    		fileName = `D:\goImg\imgs\` + utils.ReplaceName(subFileName) + ".jpg"
    		err := ioutil.WriteFile(fileName, imageBytes, 0644)
    		if err != nil {
    			fmt.Println("download failed, err = ", err)
    			return
    		}
    	}
    	fmt.Printf("download succeeded, fileName = {%s}\n", fileName)
    }

    // DownLoadPicAsync downloads an image asynchronously
    func DownLoadPicAsync(url, fileName string) {
    	picChan <- 123 // take a slot; blocks while 10 downloads are in flight
    	DownLoadPic(url, fileName)
    	<-picChan // give the slot back
    }
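`picChan` works as a counting semaphore: a buffered channel whose capacity is the number of downloads allowed at once. The sketch below isolates that idea and measures the peak concurrency. `runLimited` and its bookkeeping are hypothetical names for illustration, and an empty struct is used as the token instead of an `int`.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// runLimited starts n goroutines but lets at most limit of them execute task
// at the same time. It returns the highest number of tasks seen running at once.
func runLimited(n, limit int, task func()) int {
	sem := make(chan struct{}, limit) // capacity = number of slots
	var (
		wg      sync.WaitGroup
		mu      sync.Mutex
		running int
		peak    int
	)
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			sem <- struct{}{}        // acquire a slot (blocks when all are taken)
			defer func() { <-sem }() // release the slot

			mu.Lock()
			running++
			if running > peak {
				peak = running
			}
			mu.Unlock()

			task()

			mu.Lock()
			running--
			mu.Unlock()
		}()
	}
	wg.Wait()
	return peak
}

func main() {
	peak := runLimited(20, 3, func() { time.Sleep(10 * time.Millisecond) })
	fmt.Println(peak <= 3) // true
}
```

All goroutines are started immediately; the semaphore only limits how many are inside the guarded section, which mirrors how `DownLoadPicAsync` is called from `main`.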

    // GetUrls returns the URL of every image that was crawled
    func GetUrls(url, reg string) []string {
    	html := GetHtml(url)
    	// Matching logic
    	re := regexp.MustCompile(reg)
    	// -1 returns every match; a positive number returns at most that many
    	allString := re.FindAllStringSubmatch(html, -1)
    	fmt.Println("images captured:", len(allString))
    	imgUrls := make([]string, 0)
    	for _, str := range allString {
    		imgUrl := str[1]
    		imgUrls = append(imgUrls, imgUrl)
    	}
    	return imgUrls
    }

    // GetImgInfos returns the img + alt information as a slice of maps:
    // map{key1: "url", value1: string; key2: "fileName", value2: string}
    func GetImgInfos(url, reg string) []map[string]string {
    	// Fetch the HTML page
    	html := GetHtml(url)
    	// Compile the pattern
    	re := regexp.MustCompile(reg)
    	// -1 returns every match; a positive number returns at most that many
    	allString := re.FindAllStringSubmatch(html, -1)
    	fmt.Println("images captured:", len(allString))
    	// Create a slice of maps
    	imgInfos := make([]map[string]string, 0)
    	for _, str := range allString {
    		imgInfo := make(map[string]string, 0)
    		imgUrl := str[1]
    		imgInfo["url"] = imgUrl
    		imgInfo["fileName"] = GetImgNameFromTags(str[0])
    		imgInfos = append(imgInfos, imgInfo)
    	}
    	return imgInfos
    }

    // GetImgNameFromTags decides whether fileName comes from the alt text or from a random name
    func GetImgNameFromTags(imgUrl string) string {
    	re := regexp.MustCompile(reAlt)
    	res := re.FindAllStringSubmatch(imgUrl, -1)
    	if len(res) > 0 {
    		// res[0][1] is the element in row 1, column 2: the (Chinese) name inside the alt attribute
    		return utils.GBK2UTF8(res[0][1])
    	}
    	return utils.GetRandomName()
    }
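Since `rePicAlt` already captures the alt text as its second group, one possible variant reads both values from a single match instead of matching `reAlt` a second time. This is only a sketch under that assumption: `imgInfo` and `parseImgs` are hypothetical names, the sample HTML is made up, and the GBK conversion and name clean-up done by the `utils` package are left out.

```go
package main

import (
	"fmt"
	"regexp"
)

// imgInfo is a hypothetical record type used here instead of map[string]string.
type imgInfo struct {
	URL  string
	Name string
}

// Same pattern as rePicAlt in main.go, compiled once at package level.
var rePicAlt = regexp.MustCompile(`<img.+?src="(http.+?)".+?alt="(.+?)".*?>`)

// parseImgs extracts the URL (group 1) and the alt text (group 2) of each
// <img> tag that has both attributes in that order on one line.
func parseImgs(html string) []imgInfo {
	var infos []imgInfo
	for _, m := range rePicAlt.FindAllStringSubmatch(html, -1) {
		infos = append(infos, imgInfo{URL: m[1], Name: m[2]})
	}
	return infos
}

func main() {
	html := `<img class="x" src="http://img.example/1.jpg" alt="first">` + "\n" +
		`<img class="y" src="http://img.example/2.jpg" alt="second">`
	for _, info := range parseImgs(html) {
		fmt.Printf("%s -> %s\n", info.Name, info.URL)
	}
}
```

Note that `.` does not match a newline in Go's regexp package by default, so this pattern only finds tags written on a single line.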

    // Alt + Shift + M: extract into a function

    // GetHtml fetches the HTML of a page
    func GetHtml(url string) string {
    	// Enforce a timeout
    	ctx, cancel := context.WithTimeout(context.Background(), time.Second*60)
    	defer cancel()
    	// The result travels through the channel; the buffer of 1 lets the
    	// goroutine finish even if nobody is waiting after a timeout
    	done := make(chan string, 1)
    	go func() {
    		resp, err := http.Get(url)
    		if err != nil {
    			logs.Info("http get failed, err = ", err)
    			done <- ""
    			return
    		}
    		defer resp.Body.Close()
    		bytes, _ := ioutil.ReadAll(resp.Body)
    		time.Sleep(1 * time.Second)
    		done <- string(bytes)
    	}()
    	select {
    	case html := <-done:
    		fmt.Println("work done on time")
    		return html
    	case <-ctx.Done():
    		// timeout
    		fmt.Println("crawl timed out: err = ", ctx.Err())
    		return ""
    	}
    }
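In `GetHtml` the context only decides how long the caller waits; the HTTP request itself keeps running in the background after a timeout. A possible alternative, sketched here and not taken from the project, attaches the context to the request with `http.NewRequestWithContext` so that the request is cancelled too. `fetchHTML` is a hypothetical name, and a local `httptest` server replaces the real site so the example does not need network access.

```go
package main

import (
	"context"
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
	"time"
)

// fetchHTML is a hypothetical variant of GetHtml: the timeout is bound to the
// request, so the HTTP call is aborted when the deadline passes, and errors
// are returned to the caller instead of being logged and ignored.
func fetchHTML(url string, timeout time.Duration) (string, error) {
	ctx, cancel := context.WithTimeout(context.Background(), timeout)
	defer cancel()

	req, err := http.NewRequestWithContext(ctx, http.MethodGet, url, nil)
	if err != nil {
		return "", err
	}
	resp, err := http.DefaultClient.Do(req)
	if err != nil {
		return "", err
	}
	defer resp.Body.Close()

	body, err := io.ReadAll(resp.Body)
	if err != nil {
		return "", err
	}
	return string(body), nil
}

func main() {
	// Local test server standing in for the page to crawl.
	srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprint(w, "<html>ok</html>")
	}))
	defer srv.Close()

	html, err := fetchHTML(srv.URL, 2*time.Second)
	fmt.Println(html, err)
}
```

With this shape no extra goroutine or `select` is needed, because `Do` returns with an error as soon as the context expires.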